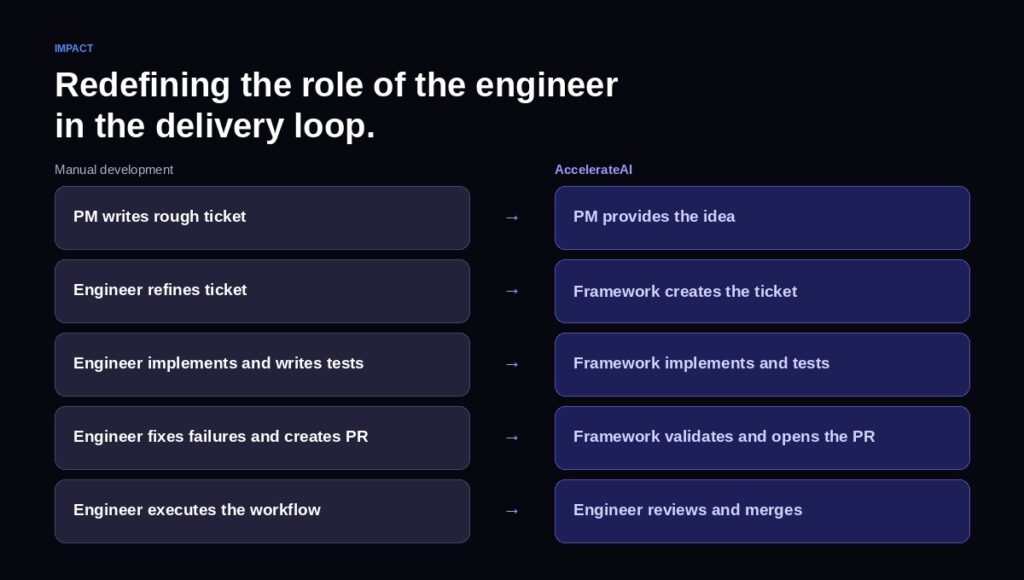

Most organizations have adopted AI coding tools. Copilots generate snippets, debug errors, draft boilerplate. But the delivery model itself remains untouched: the engineer still writes the ticket, decides the approach, runs the tests, fixes the failures, and manages the workflow end to end.

So the question worth asking is not whether AI can help with individual steps in that chain. It can. The question is whether AI can own the chain – executing continuously from rough input to validated pull request – while the engineer shifts from performer to reviewer. That perspective shaped how we approached building AccelerateAI here at Qubika. This article explains how we built it and how it works.

What AI execution looks like in practice

The AccelerateAI framework is built for exactly this model. It is not a copilot and it is not a code generation wrapper. It is an execution framework designed around Anthropic’s Claude Code, structured to carry work from idea through implementation with the engineer operating as reviewer, not executor.

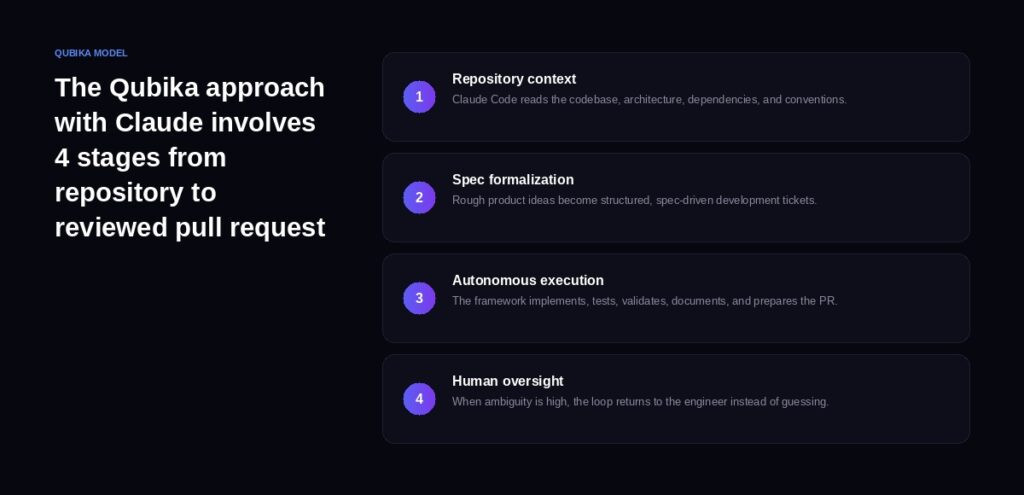

The framework follows a four-stage operator loop – automating where possible but involving human oversight and expertise when and where required.

- First, the framework reads the repository – the codebase, architecture, dependencies, and coding conventions – and builds deep contextual awareness of the project it is working in. It uses four specialized agents developed by Qubika (“architect”, “DevOps”, “software engineer”, and “generalist”) that each analyze the project from their domain perspective and build a comprehensive contextual foundation. This is where the engineer is most involved: validating that the framework’s understanding of the project is accurate before any work begins.

- Second, it takes a rough input (a product idea, a Jira ticket, a prompt) and formalizes it into a structured, implementation-ready spec – again with engineer review, ensuring that ambiguity is resolved at the planning stage rather than during execution.

- Third, it executes: planning the implementation, applying code changes, generating tests, validating results, updating documentation, and opening the pull request. Because the first two stages have front-loaded context and clarity, this phase runs autonomously from start to finish.

- Fourth, before any engineer sees the work, the framework runs a self-review loop – the agent reviews its own changes against the spec, the project guidelines, and the test results, catching issues and refining the pull request before it reaches human review. When ambiguity is too high to proceed confidently, it stops and returns to the engineer rather than guessing.

This is not a theoretical architecture. It is a working system that adapts to the specific stack, patterns, and conventions of each project. A React frontend, a Swift mobile application, and a multi-service backend each get different behavior because the framework configures itself around the codebase it finds – not the other way around.
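To make the shape of the four-stage loop easier to picture, here is a minimal Python sketch of the control flow only. It is not AccelerateAI's code: every name in it (`operator_loop`, `NeedsEngineer`, `approve`, `ambiguity`, `max_ambiguity`) is hypothetical, the stages are placeholders that just record what would happen, and the point at which ambiguity is checked is our assumption for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List


class NeedsEngineer(Exception):
    """Raised when the loop stops and hands control back to a human."""


@dataclass
class RunLog:
    # Ordered record of the stages and steps that completed.
    steps: List[str] = field(default_factory=list)


def operator_loop(
    rough_input: str,
    approve: Callable[[str, str], bool],   # hypothetical engineer review gate
    ambiguity: Callable[[str], float],     # hypothetical ambiguity score, 0..1
    max_ambiguity: float = 0.5,            # hypothetical threshold
) -> RunLog:
    log = RunLog()

    # Stage 1: read the repository and build context; the engineer validates it.
    context = "summary produced by the four specialized agents"  # placeholder
    if not approve("context", context):
        raise NeedsEngineer("context rejected")
    log.steps.append("context")

    # Stage 2: turn the rough input into a structured spec; the engineer reviews it.
    spec = f"spec for: {rough_input}"  # placeholder
    if not approve("spec", spec):
        raise NeedsEngineer("spec rejected")
    log.steps.append("spec")

    # Escalate instead of guessing when ambiguity is too high.
    # Checking here, before execution, is an assumption of this sketch.
    if ambiguity(spec) > max_ambiguity:
        raise NeedsEngineer("ambiguity too high to proceed")

    # Stage 3: autonomous execution, with no approval gates.
    for step in ("plan", "code", "tests", "validate", "docs", "open_pr"):
        log.steps.append(step)

    # Stage 4: self-review against spec, guidelines, and test results,
    # before any engineer sees the pull request.
    log.steps.append("self_review")
    return log


if __name__ == "__main__":
    result = operator_loop(
        "add password reset",
        approve=lambda stage, artifact: True,
        ambiguity=lambda spec: 0.1,
    )
    print(result.steps)
```

The design point the sketch tries to capture is that human gates sit at the front of the loop (context and spec), while execution and self-review run without interruption unless the escalation path is triggered.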

Why the Anthropic stack matters

The choice to build on Claude Code is deliberate. Three capabilities make it uniquely suited for this kind of autonomous software execution.

- The first is deep codebase comprehension. The AccelerateAI framework, running on top of Claude Code, reads entire repositories and holds architectural context across multi-step execution chains. This is fundamentally different from tools that operate on individual files or functions in isolation.

- The second is structured reasoning at scale. Claude’s extended thinking enables the framework to plan multi-file implementations, anticipate side effects across system boundaries, and self-correct before opening a pull request. The reasoning is not hidden behind a chat interface – it drives the planning and validation layers of the framework directly.

- The third is enterprise-grade predictability. Anthropic’s approach to model safety translates into auditable, consistent behavior in production engineering workflows. When the system encounters genuine ambiguity, it escalates to the engineer rather than hallucinating a plausible-looking fix. For organizations where code quality and security are non-negotiable, this is the difference between a tool you experiment with and a system you trust.

The delivery model behind it

AccelerateAI does not operate in isolation. It runs inside Qubika’s AI-enabled engineering pods – nearshore teams where AI is a core element of delivery, not an afterthought bolted onto existing workflows.

Every tool and platform used within these pods has been reviewed by AI and security teams. Custom agents are built for project-specific productivity. Defined metrics and KPIs measure the impact of AI-augmented delivery against pre-AI baselines, so the value is visible and accountable.

The early results from Qubika’s methodology are concrete: 20% productivity gains in feature delivery, code reviews that are 200% deeper with zero unreviewed merges reaching production, 80% faster resolution when failures occur, and 65% more actionable suggestions surfaced to engineers during development.

These are benchmarks measured at the team level, refined continuously, and compared against baseline performance.

What this means for technology leaders

The implications for CTOs, VPs of Engineering, and technology-forward business leaders are worth spelling out plainly.

The engineer’s role is shifting. Not disappearing – shifting. The highest-value engineering work has always been judgment: deciding what to build, how to architect it, where the risks are, and what tradeoffs to accept. AI execution does not replace that judgment. It frees engineers to spend more of their time exercising it, by handling the mechanical execution that currently consumes the majority of their hours.

The delivery economics are changing. When the cost of going from rough input to validated pull request drops significantly – and the quality and consistency of that output improves – it changes how organizations think about roadmap capacity, team sizing, and time-to-market. Features that were deprioritized due to capacity constraints become feasible. Maintenance and technical debt work that was perpetually deferred becomes addressable.

The partnership model matters. AI execution is not something you adopt by purchasing a license. It requires a framework that understands your codebase, a methodology that measures impact, and engineering teams that know how to operate in the reviewer role rather than the executor role. This is a capability that needs to be built and operated, not simply enabled.

The question that matters now

The conversation about whether AI can help with software development is over. It can, and it does, and virtually every engineering organization has adopted some form of it.

The more consequential question is how much of the software delivery workflow can be confidently handed off to AI execution while preserving context, quality, and engineering control. Not all of it – but substantially more than most organizations have attempted.

AccelerateAI is Qubika’s answer to that question: a repository-aware, Claude Code-centered execution model that is autonomous where possible and human-guided where necessary. For technology leaders evaluating how to move from AI-assisted to AI-executed delivery, we believe the framework is worth a serious look.