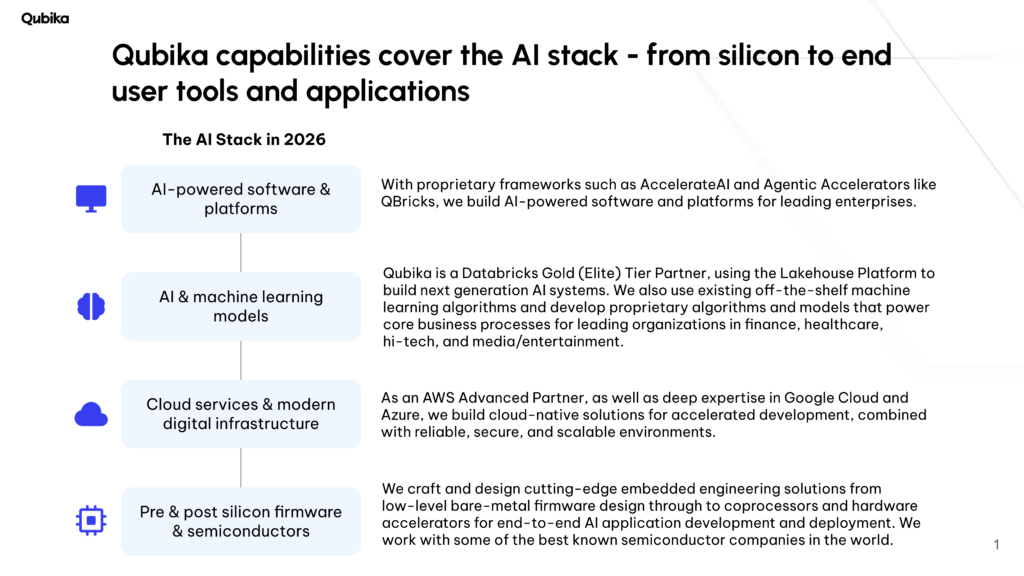

End-to-end capabilities are critical for organizations moving from digital-native to AI-native. Achieving meaningful impact with AI requires more than isolated models or tools – it depends on aligning data, infrastructure, and applications across the entire stack.

Over the past two decades, here at Qubika we’ve developed the ability to operate across this full spectrum, supporting organizations at different stages of their AI adoption. This ranges from designing and building user-facing AI applications, to developing the data platforms and cloud architectures that power them, down to low-level semiconductor and embedded engineering.

In this article, I want to highlight each of these areas of the AI stack and how they come together.

Pre- & post-silicon firmware & semiconductors

AI chip development is going through a period of major technological advances – specialized AI chips, optimized for workloads such as deep learning, natural language processing, and computer vision, are pushing performance and efficiency far beyond what general-purpose processors can achieve.

As industries demand increasingly complex AI applications, the evolution of chip design is becoming a critical driver of innovation.

Our Embedded Engineering Studio designs cutting-edge embedded engineering solutions, from low-level bare-metal firmware through to coprocessors and hardware accelerators for AI application development and deployment. Some of the solutions we are working on, for some of the world’s best-known chip manufacturers, are so advanced that they are several years away from release.

AI-powered software & platforms

This is currently one of the fastest-changing and most competitive areas of the technology industry. New AI-powered tools and services are giving enterprises the chance to rethink how they operate, change their processes, and offer different services to their customers.

Much of this innovation is being driven by rapid advances in AI foundation models, such as Meta’s Llama and Anthropic’s Claude. A key point here is that many of these foundation models are readily available, whether as openly released weights (as with Llama) or through commercial APIs (as with Claude), so businesses can build proprietary solutions on top of them rather than invest huge sums of money to train their own.

Here at Qubika, we routinely use such models to underpin the custom AI systems and solutions that we build for our clients. One example of the agents we are building is the Qubika Finance Analyst Agent – explore the demo below to see how it works.

AI & machine learning models

Through Qubika’s Data Foundation Pillar, we help organizations unlock the full value of their data. Our approach begins with identifying the right data sources – for example, in financial services this could range from transaction histories and customer profiles to enriched third-party datasets such as Rapleaf for public customer data and Neustar for identity intelligence. By bringing these sources together, we can establish a comprehensive foundation for agentic AI, advanced analytics, and machine learning.

One area of deep specialization is how our teams design and deploy machine learning algorithms built for real-world performance. We use both best-in-class off-the-shelf algorithms (such as XGBoost and logistic regression) and proprietary models tailored to specific business challenges. For example, we can leverage customer payment histories, credit bureau data, and transaction patterns to power risk scoring, fraud detection, or personalized financial services.
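To make the risk-scoring idea concrete, here is a minimal sketch of logistic regression trained with plain gradient descent, using only the Python standard library. It is an illustration rather than a description of any production model: the two features (`late_payment_rate`, `credit_utilization`), the toy data, and the function names are all hypothetical, and a real project would more likely use an established library and far richer data.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_logistic(rows, labels, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = p - y  # gradient of the log loss w.r.t. the linear score
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def risk_score(x, weights, bias):
    """Return the predicted probability of default for one customer."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Hypothetical toy data: [late_payment_rate, credit_utilization], both in 0..1.
# Label 1 means the customer defaulted, 0 means they did not.
TOY_ROWS = [[0.0, 0.1], [0.1, 0.3], [0.05, 0.2],
            [0.6, 0.9], [0.5, 0.8], [0.7, 0.7]]
TOY_LABELS = [0, 0, 0, 1, 1, 1]

weights, bias = train_logistic(TOY_ROWS, TOY_LABELS)
low = risk_score([0.02, 0.15], weights, bias)   # rarely late, low utilization
high = risk_score([0.65, 0.85], weights, bias)  # often late, high utilization
print(f"low-risk customer: {low:.2f}, high-risk customer: {high:.2f}")
```

The appeal of a model like this is that each weight can be read directly as the direction and strength of a feature's influence on the score, which is one reason logistic regression remains common in credit risk alongside tree-based methods such as XGBoost.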

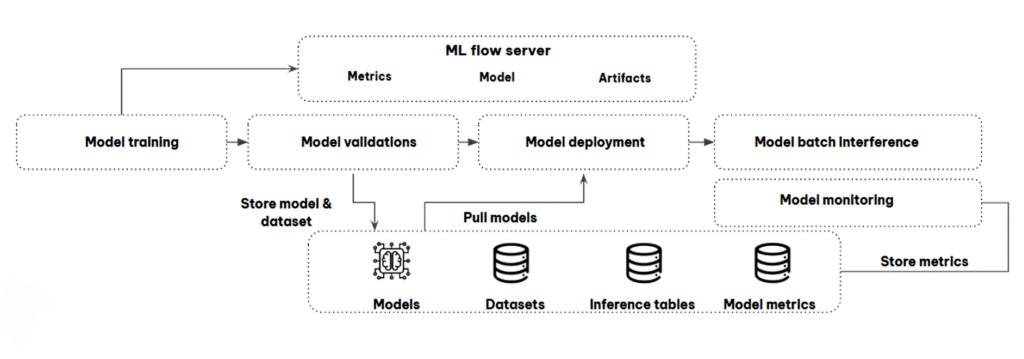

Building the model is only part of the solution. We also engineer the end-to-end systems required for production: model monitoring, performance dashboards, and automated reporting. These capabilities ensure that once deployed, models remain accurate, explainable, and aligned with business objectives over time.
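One common ingredient of model monitoring is a drift check that compares the scores a model produces today against a baseline captured at deployment. The sketch below computes the Population Stability Index (PSI) for scores in the range 0 to 1, using only the standard library. The function name, the bin count, and the sample data are assumptions made for illustration, and PSI is just one of several drift metrics a monitoring system might track.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a baseline sample and a recent sample of scores in [0, 1]."""

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Clamp so that a score of exactly 1.0 falls into the last bin.
            idx = min(max(int(v * n_bins), 0), n_bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor empty bins at eps to avoid log(0) and division by zero.
        return [max(c / total, eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical samples: baseline scores spread evenly over 0..1,
# recent scores concentrated in the upper half of the range.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, recent_scores)
print(f"no drift: {psi_same:.3f}, shifted: {psi_shifted:.3f}")
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating, though suitable thresholds depend on the model and the business context. In practice a value like this would feed the dashboards and automated reports rather than be printed.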

Cloud services & modern digital infrastructure

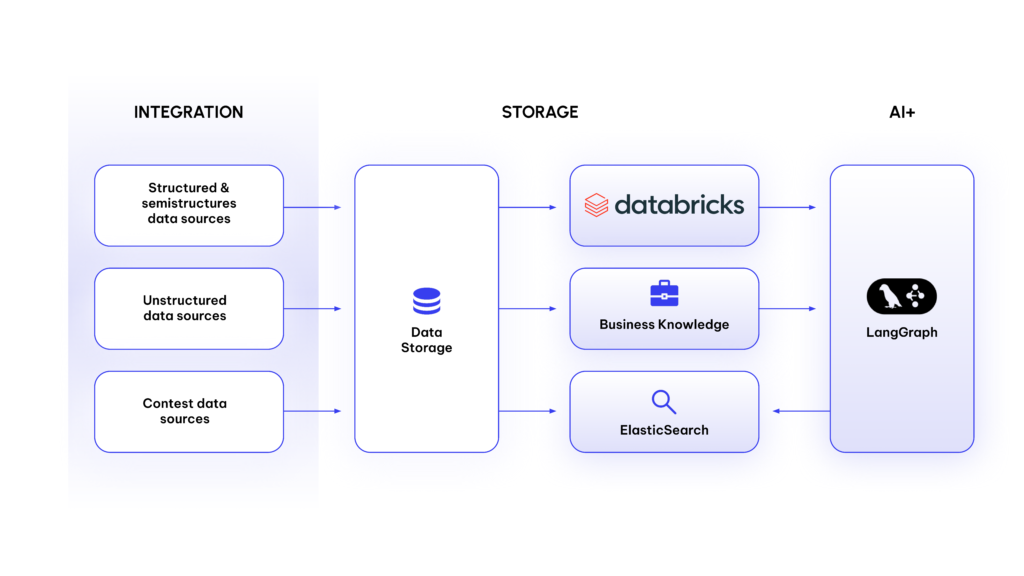

Cloud platforms are the critical component that underpins AI systems – enabling them to scale to enterprise needs. That’s why, at Qubika, our strategic partnerships with Databricks, where we are an Elite (Gold) Tier partner, and Amazon Web Services (AWS), where we hold an Advanced Tier designation, are central to helping our clients unlock the full potential of AI. As an AWS Advanced Partner, we enable enterprises to leverage cutting-edge infrastructure, while Databricks provides the unified data and AI platform to accelerate innovation. Together, these capabilities ensure that organizations can move seamlessly from experimentation to production.

At the same time, modern AI demands flexibility. Proprietary lock-in slows down adoption and limits creativity. By embracing interoperability, such as combining Databricks with frameworks like LangChain, we ensure that the AI models, data pipelines, and applications that we build can run across multiple environments.

In summary

As AI continues to redefine industries, success requires mastering the entire AI stack. At Qubika, we bring together the capabilities organizations need to thrive in 2025: from data engineering and cloud infrastructure, to machine learning development, to production monitoring and governance, all the way down to building the AI-specific chipsets. We integrate open-source frameworks, proprietary algorithms, and interoperable platforms like Databricks, AWS, and LangChain (LangGraph) to ensure flexibility, scalability, and long-term impact.